The AI Crawler Protection Paradox: Why Brands Block Bots Then Pay to Be Seen

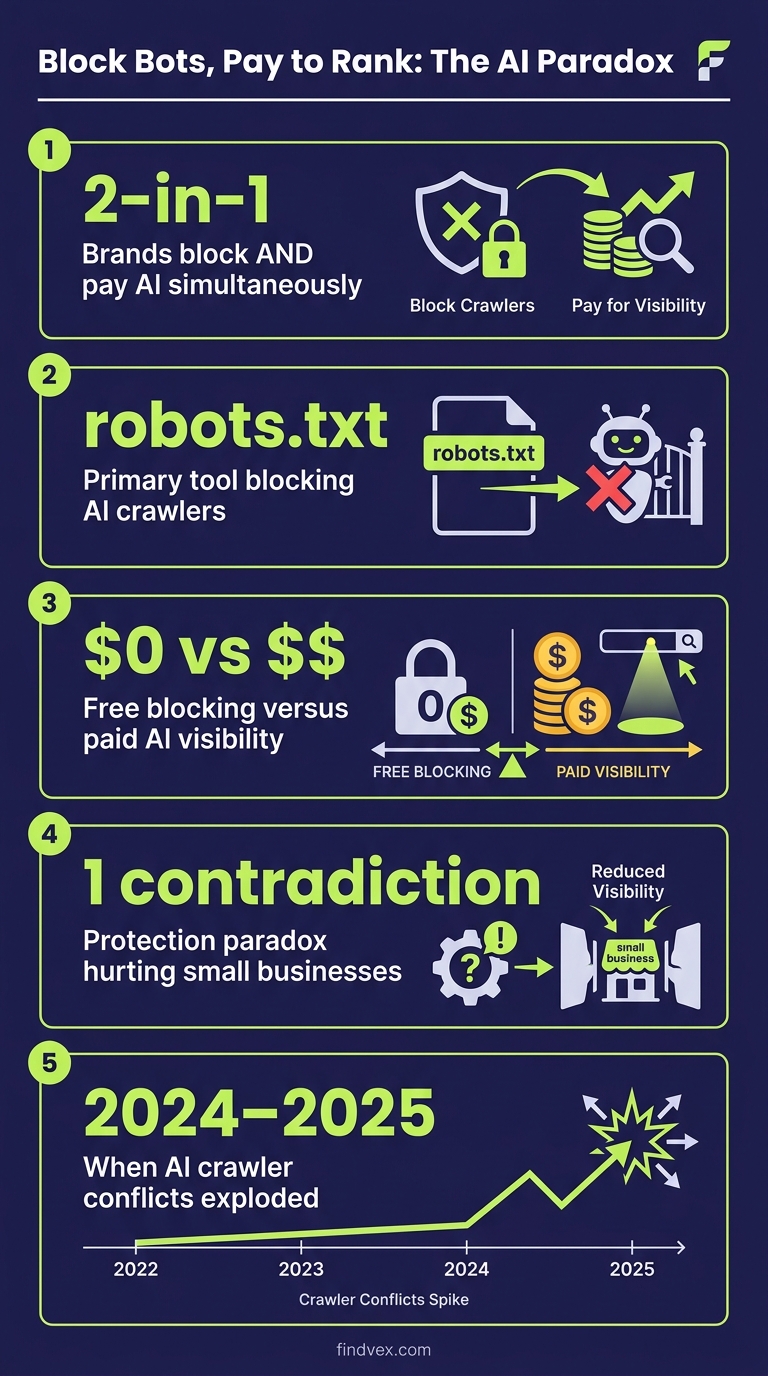

Businesses are simultaneously blocking AI crawlers from scraping their content and paying to appear in AI-generated answers. That contradiction has a name: the protection paradox. Here's what it means technically, and what small businesses should actually do about it.

Quick answer

Some brands are blocking AI crawlers via robots.txt or firewall rules to protect content, then spending budget on paid AI placements or sponsored integrations to stay visible in AI-generated answers. For most small businesses selling products or services, blocking AI crawlers is counterproductive — it removes you from AI-powered search results without protecting anything meaningful. The right move is a selective crawl policy: allow legitimate AI search crawlers, block pure scraper bots, and make your content structurally easy for AI systems to read and cite.

What Is the AI Crawler Protection Paradox?

A pattern has emerged among larger brands that reveals a fundamental contradiction in how companies are responding to AI search: they block AI crawlers to protect their content, then turn around and pay AI platforms to surface their brand in AI-generated answers. The result is a self-inflicted visibility gap — and it costs money twice.

The underlying tension is real. AI systems like ChatGPT Search, Google's AI Overviews, and Perplexity ingest content from across the web to generate answers. If your content feeds those systems, you get cited. If you block them, you disappear from a growing share of search traffic — particularly zero-click queries where users get their answer directly from an AI without visiting any website.

The paradox is this: blocking protects your content from being used for free, but it also removes you from the discovery surface that an increasing portion of your potential customers are using. Some brands have responded by paying for placement after blocking organic access. That's an expensive way to solve a problem that didn't need to exist for most businesses.

How AI Crawlers Actually Work (and Why They're Different from Googlebot)

Traditional search crawlers like Googlebot index your content so it can appear in ranked results. AI crawlers do something different: they ingest content to train language models or to power real-time retrieval systems that generate answers. The distinction matters for your strategy.

There are three broad categories of AI crawler you'll encounter in your server logs and robots.txt decisions:

- Training crawlers: These collect content to train or fine-tune AI models. Examples include GPTBot (OpenAI), ClaudeBot (Anthropic), CCBot (Common Crawl), and various undeclared scrapers. Blocking these has no immediate SEO cost, and there's a reasonable business argument for restricting them if your content is your core product.

- Retrieval crawlers: These power live AI search experiences. OAI-SearchBot (ChatGPT Search) and PerplexityBot fall here. Blocking these removes you from AI-powered answer surfaces.

- Hybrid cases: Some crawlers serve both purposes. Google's situation is particularly nuanced: Googlebot powers traditional rankings and also supplies the index that AI Overviews draw on, while Google-Extended is a robots.txt control token that governs whether your content is used for Gemini training and grounding. Google-Extended gets its own robots.txt directive, but Google does not crawl under a separate Google-Extended user-agent string.

robots.txt Is a Convention, Not a Technical Barrier

This is the part that most coverage glosses over. robots.txt is a voluntary protocol. Legitimate crawlers — Googlebot, GPTBot, PerplexityBot — honor it. Illegitimate scrapers do not. Fastly's research published in mid-2025 confirmed what many in technical SEO have known for years: a meaningful portion of AI scraping traffic comes from bots that ignore disallow directives entirely.

That means your robots.txt strategy is effective against the AI crawlers that are actually beneficial to your visibility, and less effective against the scrapers you most want to stop. It's an asymmetric tradeoff that most blocking discussions fail to acknowledge.

The more robust enforcement layer is at the CDN or WAF (Web Application Firewall) level — tools like Cloudflare's bot management, which can fingerprint and rate-limit suspicious crawlers based on behavioral signals beyond user-agent strings. Cloudflare announced default AI crawler blocking with an optional pay-per-crawl model in July 2025, but as noted by Fastly, that default exclusion carved out Googlebot and Apple's crawler — meaning it doesn't actually stop the two largest contributors to AI-powered search experiences.

Diagnosis Checklist: Is Your Current Crawl Policy Working Against You?

Before you decide whether to block or allow AI crawlers, check where you actually stand. Run through this in your robots.txt file and server logs:

- ☐ Check your robots.txt for blanket disallows: Does it contain `User-agent: *` with `Disallow: /`? If so, you may be blocking everything, including beneficial AI retrieval crawlers.

- ☐ Check for Google-Extended directives: If you've added `User-agent: Google-Extended` with `Disallow: /`, you're opting out of Gemini training and grounding but not blocking Googlebot. That's a legitimate choice; just confirm it's intentional.

- ☐ Verify how GPTBot is handled: GPTBot is OpenAI's training crawler. Per OpenAI's crawler documentation, ChatGPT Search visibility is governed by OAI-SearchBot, so blocking GPTBot opts you out of training without, by itself, removing you from ChatGPT Search responses.

- ☐ Check for OAI-SearchBot: This is the separate crawl agent for ChatGPT's real-time search feature. It's different from GPTBot and requires its own directive. If it's blocked, your content won't appear in ChatGPT Search responses.

- ☐ Review PerplexityBot and Anthropic's agents: If you want Perplexity to cite you in answers, PerplexityBot must be allowed. For Claude, check how your file treats ClaudeBot (Anthropic's training crawler) and Claude-SearchBot (the search crawler listed in Anthropic's crawler documentation).

- ☐ Look for WAF or Cloudflare bot rules: Firewall-level blocks override robots.txt. An overzealous rule blocking 'AI' or 'bot' in user-agent strings can catch legitimate retrieval crawlers.

- ☐ Check crawl logs for unrecognized bots: Requests whose IP addresses don't match the crawler their user agent claims to be are likely scrapers. These won't obey robots.txt regardless.
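The robots.txt items in the checklist above can be spot-checked with a short script. The following is a minimal sketch using Python's standard-library `urllib.robotparser`; the function name `audit_robots` and the sample file are illustrative assumptions, and Python's parser may resolve edge cases (such as rule precedence) differently from any individual crawler's own parser.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the checklist; extend the list as needed.
AI_AGENTS = [
    "Googlebot",
    "Google-Extended",
    "GPTBot",
    "OAI-SearchBot",
    "PerplexityBot",
    "ClaudeBot",
]


def audit_robots(robots_txt, agents=AI_AGENTS, path="/"):
    """Return {agent: bool} saying whether each agent may fetch `path`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, path) for agent in agents}


# Hypothetical robots.txt: opts out of Google-Extended, allows everyone else.
SAMPLE = """\
User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""

for agent, allowed in audit_robots(SAMPLE).items():
    print(f"{agent:16} {'allowed' if allowed else 'BLOCKED'}")
```

Paste the contents of your live robots.txt in place of `SAMPLE` to see at a glance which of the listed agents a blanket rule is catching.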

What to Check in Google Search Console

Google Search Console doesn't provide direct visibility into AI crawler activity, but it surfaces the symptoms of misconfigured crawl policies:

- Indexing → Pages → Blocked by robots.txt (formerly the Coverage report): If you see key pages here, your robots.txt is blocking Googlebot from indexing content that should rank. This is the most urgent fix.

- Settings → Crawl stats: A sudden drop in crawl frequency can indicate your site accidentally started blocking crawlers, or that a CDN rule is interfering.

- URL Inspection → Test Live URL: Use this to verify that Googlebot can access your most important pages after any robots.txt change.

- Security & Manual Actions: Unrelated to AI crawlers directly, but worth checking if you've recently made server-level bot changes, as some overzealous configurations trigger false positives.

- Note: Google Search Console does not show data for GPTBot, PerplexityBot, or ClaudeBot activity. For that, you need server log access or a CDN-level analytics tool.
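Since Search Console won't show this data, the server-log route can be sketched in a few lines. This is a minimal example that assumes your logs use the common "combined" format, where the user agent is the last double-quoted field; the token list, function name, and sample log lines are illustrative, not exhaustive or taken from a real site.

```python
import re
from collections import Counter

# Substrings to look for in the User-Agent field (illustrative list).
AI_CRAWLER_TOKENS = [
    "GPTBot",
    "OAI-SearchBot",
    "PerplexityBot",
    "ClaudeBot",
    "Amazonbot",
    "Bytespider",
    "CCBot",
]

# Combined log format: the user agent is the last double-quoted field.
USER_AGENT_RE = re.compile(r'"([^"]*)"\s*$')


def count_ai_crawler_hits(log_lines):
    """Count requests per declared AI crawler token."""
    counts = Counter()
    for line in log_lines:
        match = USER_AGENT_RE.search(line)
        if not match:
            continue  # skip lines that don't look like combined-format entries
        user_agent = match.group(1).lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in user_agent:
                counts[token] += 1
                break
    return counts


# Made-up sample lines using documentation-reserved IP addresses.
SAMPLE_LOG = [
    '203.0.113.7 - - [10/Jan/2026:10:00:00 +0000] "GET /services HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '198.51.100.4 - - [10/Jan/2026:10:00:02 +0000] "GET / HTTP/1.1" 200 4096 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0; +https://openai.com/searchbot)"',
    '198.51.100.9 - - [10/Jan/2026:10:00:05 +0000] "GET / HTTP/1.1" 200 4096 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

print(count_ai_crawler_hits(SAMPLE_LOG))
```

This only counts crawlers that declare themselves. A user-agent string is trivially spoofed, so treat the counts as a starting point rather than proof of who is crawling.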

A Practical Framework: What Should Small Businesses Actually Do?

The right crawl policy depends on your business model. The protection paradox mostly affects media publishers and content-as-product businesses, not the typical small business selling services or products locally. Here's how to think through it:

- You sell products or services (not content): Allow all legitimate AI retrieval crawlers. Being cited in ChatGPT Search, Perplexity, or AI Overviews is free advertising. Block only clearly abusive scrapers via WAF rules targeting behavioral anomalies, not user-agent strings.

- You monetize content through advertising or subscriptions: You have a genuine reason to restrict training crawlers. Block Google-Extended, Common Crawl, and similar training bots. But carefully evaluate whether you also want to block retrieval crawlers — doing so removes you from AI answer surfaces entirely, which is a real traffic tradeoff.

- You operate a local business: AI search is increasingly handling 'near me' and local intent queries. Blocking AI crawlers removes you from answers to questions like 'best HVAC company in Austin' generated by AI systems. This is almost never worth doing.

- You have proprietary data or research: Consider selective authentication — put your most valuable content behind a login so it's not crawlable at all, rather than relying on robots.txt which scrapers ignore.

The Pay-to-Play Layer: What Sponsored AI Visibility Actually Is

The 'paying to get seen' half of the paradox refers to a few distinct mechanisms, which are worth distinguishing clearly:

Cloudflare Pay Per Crawl: Announced July 2025, this is a proposed marketplace model where AI companies pay per crawl request to access content behind Cloudflare's network. As of the time of writing, this is an emerging infrastructure model, not a widely deployed advertising product. It's more analogous to content licensing infrastructure than to ad buying.

Paid AI search placements: Some AI platforms are beginning to test sponsored placements within AI-generated answers — analogous to how Google shows ads alongside organic results. This is a genuinely new paid channel that's early-stage across the industry.

Content licensing deals: Larger publishers have negotiated direct licensing agreements with AI companies (the Reddit-Google deal being the most cited example). This is not a small-business option at this stage.

For most small businesses, none of these paid mechanisms are currently accessible or cost-effective. The practical priority is ensuring your content is structured to be cited organically — which means clean crawlability, clear entity markup, and content that directly answers the questions your customers ask.

Developer Handoff Notes: How to Implement a Selective Crawl Policy

If you're passing this to a developer or web admin, here's a precise implementation spec. Risk level is noted for each change.

- Allow the AI crawlers you want explicitly in robots.txt (Risk: Low): Add explicit `Allow` directives for GPTBot, OAI-SearchBot, PerplexityBot, and ClaudeBot. Don't rely on a blanket `User-agent: *` Allow. Be explicit so future additions to your file don't accidentally catch these agents.

- Block training-only crawlers in robots.txt (Risk: Low for non-Google training bots): Add `User-agent: Google-Extended` with `Disallow: /` only if you've made a deliberate business decision about Gemini training and grounding. This does not affect traditional Google ranking.

- Add behavioral blocking in Cloudflare / WAF rules (Risk: Medium; test in log mode first): Create rules that flag crawlers hitting your site at inhuman request rates (e.g., >100 requests/minute from a single IP) rather than matching user-agent strings. Run in log/observe mode for 48 hours before enforcing.

- Add JSON-LD structured data to all key pages (Risk: None): Ensure every service page, location page, and key content page has appropriate schema markup. This helps AI systems understand entity relationships and cite you accurately. See our technical SEO audit checklist for schema implementation guidance.

- Test after every change: Use Google's URL Inspection tool and a robots.txt tester to verify Googlebot access is unaffected before deploying any crawl policy change to production.
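As one way to hand the robots.txt part of this spec to a developer, the sketch below holds a candidate policy as a string and checks it before deployment. The policy text follows the spec above (the Google-Extended block is optional), `is_allowed` is a hypothetical helper name, and the check uses Python's standard `urllib.robotparser`, which approximates but does not replace Google's own testing tools.

```python
from urllib.robotparser import RobotFileParser

# Candidate robots.txt following the handoff spec above.
SELECTIVE_POLICY = """\
# AI crawlers allowed explicitly
User-agent: GPTBot
User-agent: OAI-SearchBot
User-agent: PerplexityBot
User-agent: ClaudeBot
Allow: /

# Optional: only if you have decided to opt out of Gemini training
User-agent: Google-Extended
Disallow: /

# Everyone else, including Googlebot
User-agent: *
Allow: /
"""


def is_allowed(policy, agent, path="/"):
    """Check whether `agent` may fetch `path` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(policy.splitlines())
    return parser.can_fetch(agent, path)


# Pre-deployment check: Googlebot and the named crawlers must stay allowed.
for agent in ["Googlebot", "GPTBot", "OAI-SearchBot", "PerplexityBot", "ClaudeBot"]:
    assert is_allowed(SELECTIVE_POLICY, agent), f"{agent} is unexpectedly blocked"
print("Policy check passed")
```

Running a check like this in a deploy script gives an early warning if a later edit to the file accidentally blocks one of these agents.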

What the Protection Paradox Means for Your 2026 SEO Strategy

The deeper implication here is that SEO strategy now requires explicit decisions about which discovery surfaces you want to appear on, and that those surfaces increasingly include AI systems that operate outside the traditional Googlebot-to-rankings pipeline.

A site that is technically healthy for traditional search — fast, crawlable, well-structured — is already most of the way to being AI-crawler-friendly. The additional steps are deliberate: allow the right bots, implement structured data correctly, and write content that answers questions directly rather than just optimizing for keyword density.
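For the structured data step, a small generator can keep markup consistent across pages. The sketch below builds a schema.org `LocalBusiness` JSON-LD snippet; the function name, the choice of properties, and the business details are hypothetical placeholders, and the right schema type depends on your business.

```python
import json


def local_business_jsonld(name, url, telephone, street, city, region, postal_code):
    """Return a <script> tag containing LocalBusiness JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": telephone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
        },
    }
    # Escape "</" so a value can never close the script tag early;
    # "\/" is a valid JSON escape for "/".
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Placeholder business details for illustration only.
print(
    local_business_jsonld(
        name="Example HVAC Co.",
        url="https://example.com",
        telephone="+1-512-555-0100",
        street="100 Main St",
        city="Austin",
        region="TX",
        postal_code="78701",
    )
)
```

Validate the output with a schema testing tool before publishing, since this sketch does not check that the values themselves are well-formed.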

If you're working through the broader picture of how search is changing for small businesses, our piece on the 2026 SEO strategy small businesses should actually follow covers the strategic layer in more detail. And if crawl budget and indexing fundamentals are areas you haven't fully locked down yet, the technical SEO audit checklist for small business websites is the right starting point before you start making robots.txt changes.

FAQs

Will blocking AI crawlers hurt my Google rankings?

Blocking Googlebot will hurt your Google rankings, but Google-Extended is a separate robots.txt token from Googlebot. You can disallow Google-Extended (which controls use of your content for Gemini training and grounding) without affecting Googlebot or traditional rankings; Google states that it does not affect inclusion in Google Search. The tradeoff is that your content may be used less in Gemini-generated answers. Blocking OAI-SearchBot or PerplexityBot has no effect on Google rankings but removes you from those platforms' answer surfaces, while blocking GPTBot or ClaudeBot mainly opts you out of model training.

How do I know which AI crawlers are currently visiting my site?

Check your server access logs or CDN analytics (Cloudflare, Fastly, etc.) and filter by user-agent strings. Common AI crawlers to look for: GPTBot, OAI-SearchBot, PerplexityBot, ClaudeBot, Amazonbot, and Bytespider. Google-Extended will not show up in logs, because it is a robots.txt token rather than a crawler with its own user-agent string. Any crawler hitting your site at high volume with an unrecognized user agent and no matching reverse-DNS record is likely an undeclared scraper.
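The reverse-DNS check mentioned above can be sketched as a forward-confirmed lookup: resolve the IP to a hostname, confirm the hostname belongs to the crawler's domain, then resolve the hostname back and confirm it returns the same IP. The function name and the injectable lookup parameters are assumptions made for testability. The `googlebot.com` and `google.com` suffixes follow Google's published verification guidance; other operators may publish IP ranges instead, so check each vendor's documentation.

```python
import socket


def verify_crawler_ip(ip, allowed_suffixes, reverse_lookup=None, forward_lookup=None):
    """Forward-confirmed reverse DNS check for a claimed crawler IP (IPv4)."""
    # Default to real DNS lookups; callers may inject fakes for testing.
    reverse_lookup = reverse_lookup or (lambda addr: socket.gethostbyaddr(addr)[0])
    forward_lookup = forward_lookup or (lambda host: socket.gethostbyname_ex(host)[2])
    try:
        hostname = reverse_lookup(ip).rstrip(".").lower()
        in_domain = any(
            hostname == suffix or hostname.endswith("." + suffix)
            for suffix in allowed_suffixes
        )
        if not in_domain:
            return False
        # The hostname must resolve back to the original IP.
        return ip in forward_lookup(hostname)
    except OSError:
        # Covers socket.herror and socket.gaierror (no PTR record, lookup failure).
        return False


GOOGLEBOT_SUFFIXES = ("googlebot.com", "google.com")
# Example call (performs live DNS lookups when run):
# verify_crawler_ip("66.249.66.1", GOOGLEBOT_SUFFIXES)
```

A request that claims to be Googlebot in its user agent but fails this check is a reasonable candidate for the scraper bucket.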

Does robots.txt actually stop AI scrapers?

It stops legitimate crawlers that honor the protocol, such as Googlebot, GPTBot, PerplexityBot, and most declared AI bots. It does not stop undeclared scrapers, which account for a significant share of unwanted AI traffic. For stronger enforcement, use WAF-level rules based on behavioral signals (request rate, IP reputation, missing browser headers) rather than relying solely on robots.txt.
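To make the request-rate signal concrete, here is a minimal sketch of a sliding-window check you could run over parsed log data before writing a WAF rule. The function name and the default threshold of 100 requests per 60 seconds are illustrative assumptions; a real WAF applies this kind of logic at the edge and combines it with other signals.

```python
from collections import defaultdict, deque


def flag_high_rate_ips(requests, max_per_window=100, window_seconds=60):
    """Return the set of IPs exceeding `max_per_window` requests in any window.

    `requests` is an iterable of (timestamp_in_seconds, ip) tuples sorted by time.
    """
    recent = defaultdict(deque)  # ip -> timestamps inside the current window
    flagged = set()
    for timestamp, ip in requests:
        window = recent[ip]
        window.append(timestamp)
        # Drop timestamps that have aged out of the window.
        while window and timestamp - window[0] >= window_seconds:
            window.popleft()
        if len(window) > max_per_window:
            flagged.add(ip)
    return flagged


# Made-up traffic: one IP bursts 101 requests in about 50 seconds,
# another makes one request per second for 200 seconds.
burst = [(i * 0.5, "203.0.113.7") for i in range(101)]
steady = [(float(i), "198.51.100.4") for i in range(200)]
print(flag_high_rate_ips(sorted(burst + steady)))
```

Running this in observation mode against a day of logs shows which IPs a threshold would catch before you enforce anything.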

What is Cloudflare's Pay Per Crawl and should small businesses care about it?

Cloudflare's Pay Per Crawl is an infrastructure model announced in July 2025 that would allow site owners to charge AI companies per crawl request, rather than simply blocking or allowing access. For small businesses, this is not currently an actionable product — it's an emerging industry mechanism aimed primarily at large publishers and media companies. Monitor it, but don't delay your current crawl policy decisions waiting for it to mature.

Should a local business block AI crawlers?

Almost certainly not. Local businesses benefit from AI systems recommending them in response to queries like 'best electrician near me' or 'dentist accepting new patients in [city].' Blocking AI retrieval crawlers removes you from those recommendation surfaces without meaningful upside. The right approach is to ensure your content is structured clearly, your Google Business Profile is complete, and your on-site content directly answers common local questions.

How is Google-Extended different from Googlebot?

Googlebot is the crawler that powers traditional Google Search indexing and rankings. Google-Extended is a separate robots.txt token that controls whether content Google has crawled can be used to train Gemini models and to ground their answers. You can disallow Google-Extended in robots.txt without affecting Googlebot. Doing so may reduce how often your content is used in Gemini-generated answers, but Google states that it does not affect inclusion in Google Search.

Sources & Citations

- https://www.fastly.com/blog/the-truth-about-blocking-ai-and-how-publishers-can-still-win

- https://searchengineland.com/cloudflare-to-block-ai-crawlers-by-default-with-new-pay-per-crawl-initiative-457708

- https://www.searchenginejournal.com/how-brands-block-ai-crawlers-then-pay-to-get-seen-the-protection-paradox/572267/

Marcus Chen

Writing about AI, search, and what actually moves the needle for US small businesses.
